[EXP] Ollama AI Infrastructure Exposure: Memory Disclosure Risk in Self-Hosted Model Servers

Report Type:

EXP

Threat Category:

AI Infrastructure Exposure / Sensitive Runtime Context Disclosure

Assessment Date:

May 9, 2026

Primary Impact Domain:

Sensitive Data Exposure

Secondary Impact Domains:

Credential Exposure, Intellectual Property Exposure, Cloud and Repository Access Risk, Developer Workflow Disruption, AI Infrastructure Trust, Regulatory and Customer Assurance Risk

Affected Asset Class:

Self-Hosted AI Model Servers / Ollama Infrastructure

Threat Objective Classification:

Exposure Exploitation / Runtime Context Access / Downstream Credential Enablement

BLUF

Memory disclosure exposure in self-hosted Ollama model servers creates material enterprise risk: reachable AI model infrastructure becomes a potential path to sensitive runtime data. The risk is driven by vulnerable or unpatched Ollama services that are reachable from the internet, untrusted internal networks, shared AI lab environments, developer systems, or broadly accessible model-serving paths. The threat posture is elevated because Ollama environments may process sensitive prompts, source code, credentials, API tokens, environment variables, tool outputs, customer data, internal documentation, and automation context. Executive action is required to validate exposure, confirm patch state, restrict unsafe access, preserve AI infrastructure telemetry, review suspicious model-ingestion activity, assess model artifact handling, investigate unusual outbound communication, and determine whether exposed runtime context created downstream credential, data, or operational risk.

Executive Risk Translation

This threat shifts business risk from isolated AI service exposure to loss of confidence in self-hosted model infrastructure that may process sensitive enterprise context. The primary concern is not only whether an Ollama server was vulnerable, but whether exposed model-serving infrastructure allowed untrusted interaction with model-ingestion, artifact-handling, or model-transfer functionality before remediation. If suspicious activity occurred, response may expand into service containment, model artifact review, prompt and data exposure assessment, credential and API-token rotation, repository and cloud access review, developer workflow validation, outbound communication analysis, legal review, business-unit assurance, and executive incident governance. This creates financial, operational, data-protection, intellectual-property, security-governance, and reputational exposure beyond the initially affected model server.

S3 — Why This Matters Now

· Ollama exposure should be treated as an AI infrastructure risk, not as a routine developer-tool issue.

· Self-hosted model servers may process prompts, source code, credentials, API tokens, tool outputs, customer information, and internal operational context.

· Exposed or broadly reachable Ollama services create risk when untrusted sources can interact with model-ingestion, model-import, artifact-handling, or model-push functionality.

· Memory disclosure risk is materially different from ordinary service exposure because sensitive runtime context may be exposed without clear evidence of traditional host compromise.

· Patch status alone does not prove that exposed or vulnerable Ollama infrastructure was not accessed or abused before remediation.

· Historical review is required for Ollama services that were internet-facing, reachable from untrusted internal networks, deployed in shared AI labs, or connected to sensitive workflows before patch validation.

· Detection must focus on behavior-led correlation across exposure, model-ingestion activity, artifact changes, runtime instability, outbound communication, and downstream credential or identity activity.

· Exposure-only telemetry should support prioritization and hunting, but it must not be treated as confirmed data exposure without suspicious interaction or follow-on evidence.

· Organizations without reliable asset inventory, route-level logs, endpoint telemetry, model artifact monitoring, container visibility, DNS logs, egress monitoring, and identity or credential-management telemetry face elevated risk of delayed detection and incomplete scoping.

S4 — Key Judgments

· Ollama AI infrastructure exposure creates a high-priority data-exposure and runtime-context risk when vulnerable or unpatched model servers are reachable from untrusted paths.

· The primary business risk is unauthorized exposure of sensitive prompts, credentials, source code, customer data, tool outputs, internal documentation, or environment context processed by self-hosted model infrastructure.

· The strongest enterprise risk signal is exposed Ollama infrastructure receiving suspicious model-ingestion activity followed by unusual model artifact creation, runtime instability, outbound communication, model-push behavior, or downstream credential activity.

· Exposed or vulnerable Ollama services should drive urgent remediation and retrospective review, but exposure alone should not be treated as confirmed compromise.

· Model artifact activity is a high-value detection anchor because suspicious model ingestion may create new model files, artifact changes, manifest updates, or abnormal writes to model storage paths.

· Outbound communication after suspicious model activity is a high-priority escalation signal because it may indicate artifact transfer, data movement, attacker-directed communication, or post-exposure activity.

· Identity, cloud, repository, and credential-management telemetry are important because the most damaging outcome may occur after exposed secrets or runtime context are used elsewhere.

· Detection must remain behavior-led because model names, artifact names, payload details, source infrastructure, destination infrastructure, and public proof-of-concept details can change quickly.

· Network-only visibility is insufficient because it may not confirm model artifact creation, memory-related instability, local file activity, or downstream credential use.

· Executive risk reduction depends on exposed asset identification, patch validation, access restriction, telemetry preservation, model artifact review, egress analysis, credential rotation where warranted, and validated detection coverage across AI infrastructure, endpoint, container, network, identity, cloud, and repository telemetry.

S5 — Executive Risk Summary

Business Risk

Ollama AI infrastructure exposure can create material data-protection, intellectual-property, operational, and security-governance risk when self-hosted model servers process sensitive prompts, source code, customer data, credentials, API tokens, environment variables, tool outputs, internal documentation, or automation context. Risk increases when affected systems support developer workflows, coding assistants, AI labs, shared model infrastructure, CI systems, cloud workloads, customer-support workflows, regulated data handling, or internal automation connected to privileged tools.

Technical Cause

The risk is driven by vulnerable or unpatched Ollama services that are reachable from internet-facing, untrusted internal, broadly accessible, or poorly segmented network paths. The enterprise detection model should focus on exposed service access, suspicious model-ingestion or model-transfer functionality, abnormal model artifact creation, runtime instability, process crashes, unusual outbound communication, model-push behavior, and downstream credential or identity activity.

Threat Posture

The threat posture is elevated because successful abuse may expose sensitive runtime context without requiring conventional malware deployment, persistent access, or obvious host compromise. The risk is amplified when Ollama environments are connected to repositories, development systems, cloud accounts, secrets, customer workflows, automation tools, or internal APIs. Affected organizations may need to determine not only whether the model server was exposed, but whether sensitive prompts, credentials, source code, model artifacts, or tool outputs were accessible during the exposure window.

Executive Decision Requirement

Executives must require immediate validation of Ollama asset inventory, exposure state, patch status, access restrictions, model-ingestion history, artifact changes, runtime instability, outbound communication, linked credentials, repository access, cloud activity, and telemetry coverage. Response leadership should also confirm that vulnerable or exposed systems receive retrospective review and downstream credential-risk assessment rather than being closed solely on patch completion.

S6 — Executive Cost Summary

Financial exposure from memory disclosure risk in self-hosted Ollama model servers depends on exposed-server count, exposure duration, patch latency, sensitive prompt and data scope, credential and token footprint, developer workflow dependency, model artifact activity, outbound communication, telemetry completeness, downstream credential use, repository or cloud integration risk, containment burden, and the degree to which exposed model infrastructure may have processed proprietary code, customer data, secrets, tool outputs, or regulated information.

Low Impact Scenario

Rapid assessment confirms that affected Ollama services were patched or mitigated quickly, were not reachable from untrusted networks, and were accessible only through approved administrative paths. Logs are preserved, and the review finds no suspicious model-ingestion activity, no unusual model artifact creation, no runtime instability near suspicious access, no abnormal outbound communication linked to the exposure, and no downstream credential, repository, cloud, or identity activity indicating misuse. Response still requires emergency inventory validation, patch confirmation, access restriction review, limited model artifact review, targeted credential exposure assessment, endpoint and network hunting, and executive tracking because sensitive AI infrastructure exposure existed; estimated impact $250K to $2M.

Moderate Impact Scenario

One or more Ollama servers were vulnerable, unpatched, internet-facing, broadly reachable, or accessible through untrusted internal paths during the exposure window, and investigation identifies suspicious model-ingestion activity, unusual model artifact handling, runtime instability, rare outbound communication, model-push behavior, incomplete telemetry, or limited downstream credential concern without confirmed large-scale data exposure, sustained adversary control, or broad cloud, repository, or customer-data impact. Response requires incident-response mobilization, service containment, model artifact review, prompt and data exposure assessment, targeted credential and API-token rotation, developer workflow validation, egress analysis, endpoint and container review, cloud and repository access review, detection tuning, legal assessment, executive coordination, and business-unit or customer communications readiness; estimated impact $3M to $15M.

High Impact Scenario

Confirmed or strongly suspected exposure affects Ollama infrastructure connected to sensitive prompts, proprietary source code, customer data, credentials, API tokens, environment variables, cloud services, repositories, CI systems, internal APIs, or shared AI workflows, with evidence of suspicious model-ingestion activity, abnormal artifact creation, runtime instability, unusual outbound communication, model-push behavior, credential use, repository access, cloud activity, or incomplete historical telemetry. Response may require broad service containment, AI workflow suspension, model artifact quarantine, credential and token rotation at scale, repository and cloud access review, customer-data impact assessment, developer environment validation, automation review, infrastructure rebuild, legal and regulatory review, cyber insurance engagement, customer assurance where applicable, and board-level incident governance; estimated impact $20M to $100M or higher.

S6A — Key Cost Drivers

· Number of affected Ollama servers, self-hosted AI model servers, shared AI lab systems, developer workstations, container hosts, and cloud-hosted model-serving workloads.

· Whether affected Ollama services were internet-facing, externally reachable, broadly reachable internally, or accessible from untrusted network paths before remediation.

· Duration of exposure before patch validation, access restriction, service isolation, or compensating control deployment.

· Whether the affected environment processed proprietary source code, customer data, credentials, API tokens, secrets, tool outputs, regulated information, or sensitive internal prompts.

· Whether suspicious model-ingestion, model import, artifact handling, model-push, or model-transfer behavior occurred before remediation.

· Whether suspicious activity was followed by new model artifacts, model storage changes, unusually large model files, temporary model components, or writes outside approved model paths.

· Whether suspicious access was followed by Ollama crashes, process restarts, abnormal memory pressure, model-loading errors, service instability, container restarts, or runtime anomalies.

· Whether outbound communication occurred to newly observed, rare, unapproved, cloud-storage, file-sharing, anonymous-hosting, unknown model-registry, or low-prevalence destinations.

· Scope of credentials, API tokens, repository access tokens, cloud credentials, service-account keys, environment variables, secrets, or tool outputs potentially present in the Ollama runtime environment.

· Whether affected systems were connected to coding assistants, CI systems, cloud accounts, internal APIs, customer-support workflows, developer repositories, or automation tools.

· Availability and retention of reverse proxy logs, ingress logs, application logs, endpoint telemetry, file telemetry, container logs, DNS logs, firewall logs, NDR telemetry, egress records, cloud audit logs, identity logs, and credential-management activity.

· Ability to map Ollama hosts to owners, users, service accounts, repositories, cloud roles, deployment environments, approved model sources, and approved outbound destinations.

· Need for credential rotation, API-token replacement, cloud-key review, repository access review, secrets-management validation, model artifact review, prompt exposure assessment, or customer-data scoping.

· Degree to which incomplete telemetry forces broader containment, credential rotation, model artifact quarantine, developer workflow suspension, or executive incident governance.

· Need for legal review, regulatory assessment, customer assurance, contractual notification analysis, insurance reporting, workforce communications, or board-level oversight.

Most Likely Scenario Justification

The moderate scenario is most likely when exposed or vulnerable Ollama infrastructure requires historical compromise review and sensitive runtime context assessment, but available evidence does not confirm large-scale data exposure, sustained adversary control, broad credential misuse, repository compromise, cloud compromise, or customer-impacting disclosure. The estimate moves toward the lower end when telemetry confirms rapid remediation, short exposure duration, no suspicious model-ingestion activity, no unusual artifact creation, no runtime instability, no abnormal egress, no sensitive workflow connection, and no downstream credential activity. The estimate moves toward the upper end when affected systems are internet-facing, process sensitive prompts or source code, connect to cloud services or internal automation, have incomplete telemetry, show suspicious model activity, include unusual artifact handling, require credential rotation, or trigger repository, cloud, customer-data, or regulated-data review.
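The scenario selection described above can be sketched as a small decision function. This is a minimal illustration, not a costing model: the field names in `Findings` and the function `select_scenario` are hypothetical, the branching is one possible reading of the three scenario descriptions, and only the dollar ranges are taken from this report.

```python
from dataclasses import dataclass

# Impact ranges in USD, quoted from the scenarios in this section.
# The high-impact scenario is stated as "$20M to $100M or higher",
# so its upper bound here is illustrative only.
SCENARIO_RANGES = {
    "low": (250_000, 2_000_000),
    "moderate": (3_000_000, 15_000_000),
    "high": (20_000_000, 100_000_000),
}


@dataclass
class Findings:
    """Hypothetical summary of an assessment; field names are assumptions."""
    untrusted_reachable: bool            # reachable from internet or untrusted paths
    suspicious_activity: bool            # ingestion, artifact, instability, egress, or push signals
    sensitive_integration: bool          # repositories, cloud, CI, customer data, secrets
    exposure_confirmed_or_strongly_suspected: bool
    telemetry_complete: bool             # logs preserved and sufficient for scoping


def select_scenario(f: Findings) -> str:
    """Map assessment findings to the low, moderate, or high impact scenario."""
    if f.exposure_confirmed_or_strongly_suspected and f.sensitive_integration:
        return "high"
    if not f.untrusted_reachable and not f.suspicious_activity and f.telemetry_complete:
        return "low"
    return "moderate"
```

Under these assumptions, any case that is neither clearly low nor clearly high falls into the moderate scenario, which matches the judgment that moderate is the most likely outcome when evidence is incomplete.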

S6B — Compliance and Risk Context

Compliance Exposure Indicator

Moderate to High, depending on whether the exposure involved sensitive prompts, customer data, regulated information, source code, credentials, API tokens, secrets, tool outputs, repository content, or cloud access on systems subject to regulatory, contractual, privacy, customer, insurance, intellectual-property, or material business obligations, and on whether downstream identity activity, customer-impact uncertainty, or incomplete forensic scoping remains unresolved.

Risk Register Entry

Risk Title

Ollama AI Infrastructure Exposure and Sensitive Runtime Context Disclosure Risk

Risk Description

Adversaries may access or abuse exposed, vulnerable, or unpatched Ollama model-serving infrastructure to interact with model-ingestion or model-transfer functionality, trigger abnormal model artifact handling, expose sensitive runtime context, communicate externally from AI infrastructure, or enable downstream use of credentials, API tokens, source code, prompts, customer data, environment variables, tool outputs, repositories, cloud services, or internal automation paths.

Likelihood

High.

Impact

Severe.

Risk Rating

Critical.

Annualized Risk Exposure

Estimated $5M to $35M or higher based on exposed Ollama server count, untrusted reachability, exposure duration, patch latency, sensitive prompt and data scope, credential and token footprint, developer workflow dependency, cloud or repository integration, model artifact activity, outbound communication, telemetry completeness, containment complexity, downstream credential risk, customer assurance requirements, and legal or regulatory obligations.

S7 — Risk Drivers

· Exposed or broadly reachable Ollama services create direct access paths to self-hosted AI model infrastructure.

· Vulnerable or unpatched model servers increase the likelihood that suspicious model-ingestion behavior can create sensitive runtime data exposure.

· Ollama environments may process prompts, source code, credentials, API tokens, environment variables, tool outputs, customer data, and internal operational context.

· AI lab systems and developer-hosted model servers may have weaker access controls, incomplete logging, temporary deployment patterns, or unclear ownership.

· Shared model infrastructure can expand blast-radius risk when multiple users, teams, workflows, or automation paths depend on the same model-serving environment.

· Model-ingestion, model import, artifact creation, and model-push behavior create durable detection anchors but may also overlap with legitimate AI experimentation.

· Runtime instability after suspicious model-ingestion activity may indicate abnormal model handling even when direct memory visibility is unavailable.

· Unusual outbound communication after model activity may indicate artifact transfer, data movement, attacker-directed communication, or follow-on activity.

· Credential or token exposure can force rotation across cloud accounts, repositories, CI systems, internal APIs, service accounts, developer tools, and secrets-management platforms.

· Patch validation does not prove exposed systems were uncompromised before remediation.

· Missing asset inventory, route-level logs, endpoint telemetry, file telemetry, container logs, DNS records, egress telemetry, identity logs, or credential-management records can materially weaken scoping.

· Legitimate model experimentation, model pulls, model imports, automation, patching, scanning, monitoring, and developer testing can resemble suspicious behavior without strong ownership and baseline context.

· Over-reliance on static exploit strings, public proof-of-concept artifacts, file names, model names, attacker infrastructure, or fixed payload indicators can miss evolved activity and post-exposure behavior.

S8 — Bottom Line for Executives

Memory disclosure exposure in self-hosted Ollama model servers should be treated as a high-priority AI infrastructure and sensitive runtime context risk because exposed model-serving environments may process prompts, source code, secrets, customer data, tool outputs, and credentials. The key executive concern is not only whether affected Ollama services were patched, but whether exposed systems received suspicious model-ingestion activity or generated follow-on evidence of abnormal artifact handling, outbound communication, runtime instability, or downstream credential use before remediation. Risk reduction depends on exposed asset scoping, patch validation, access restriction, log preservation, model artifact review, egress analysis, prompt and data exposure assessment, credential review, downstream cloud and repository hunting, and behavior-based detection coverage. Organizations should prioritize this report as an AI infrastructure trust issue because successful exposure can create data-protection uncertainty, intellectual-property risk, credential risk, developer workflow disruption, customer assurance pressure, regulatory uncertainty, and board-level incident governance requirements.

S9 — Board-Level Takeaway

Ollama exposure turns self-hosted AI model infrastructure into a potential sensitive-data and credential-risk surface. The board-level concern is that attackers may target the same systems used to process prompts, source code, credentials, tool outputs, customer data, and internal automation context. Leadership should require evidence that affected Ollama infrastructure has been identified, unsafe exposure has been removed or restricted, patch state has been validated, historical activity has been reviewed, model artifacts have been assessed, outbound communication has been analyzed, credentials and tokens have been evaluated, and downstream repository, cloud, identity, and secrets-management activity can be scoped with confidence. This report supports governance decisions around AI infrastructure security, sensitive data handling, exposure management, credential containment, telemetry readiness, incident-response escalation, regulatory posture, and executive oversight of self-hosted model-server risk.

Figure 2

S10 — Threat Overview

The memory disclosure risk assessed in this report concerns exposed or vulnerable self-hosted Ollama model infrastructure that may process sensitive enterprise runtime context. The core risk is that an Ollama service reachable from the internet, untrusted internal networks, shared AI labs, developer environments, or broadly accessible model-serving paths may receive suspicious model-ingestion or artifact-handling activity before remediation.

This exposure should be treated as an AI infrastructure risk rather than a standard web application issue. Ollama environments may process prompts, source code, credentials, API tokens, environment variables, tool outputs, internal documentation, customer data, and automation context. If vulnerable infrastructure is reachable from untrusted paths, the business concern is not limited to service exposure. The concern is whether sensitive runtime context, model artifacts, credentials, or downstream access paths were exposed, transferred, or later abused.

The highest-value threat model is behavior-led. Defenders should focus on exposed service reachability, suspicious model-ingestion activity, abnormal model artifact handling, runtime instability, unusual outbound communication, and downstream credential or identity activity. Exposure alone should not be treated as confirmed compromise. Suspicious model activity, artifact changes, egress, instability, or post-exposure credential use materially increases confidence and response priority.

S11 — Threat Classification and Type

Threat Type

AI infrastructure exposure and sensitive runtime context disclosure risk.

Threat Sub-Type

Self-hosted model server exposure with suspicious model-ingestion, artifact-handling, outbound communication, and downstream credential-risk potential.

Operational Classification

Exposed AI model-serving infrastructure with potential data-exposure, credential-exposure, developer-workflow, and downstream access implications.

Primary Function

The threat's primary effect is exposure of sensitive runtime context processed by Ollama infrastructure through vulnerable or untrusted interaction with the model-serving environment. The most material enterprise concern is unauthorized access to prompts, source code, credentials, tokens, model artifacts, tool outputs, customer data, or automation context associated with self-hosted AI workflows.

S12 — Campaign or Activity Overview

This report does not require a named adversary campaign to establish material risk. The relevant activity pattern is exposure of vulnerable or unpatched Ollama model-serving infrastructure to untrusted access paths, followed by suspicious interaction with model-ingestion, model-import, model artifact, or model-transfer functionality.

Observed or suspected activity should be evaluated through a sequence-based model. The most relevant sequence begins with exposed Ollama service reachability, progresses to suspicious model-ingestion or artifact-handling behavior, and becomes more serious when paired with new model artifacts, runtime instability, unusual outbound communication, model-push behavior, or downstream credential and identity anomalies.

The exposure is most concerning in environments where Ollama is connected to developer repositories, coding assistants, AI labs, CI systems, cloud services, internal APIs, secrets, customer workflows, or automation tools. In those environments, memory disclosure or sensitive runtime exposure can create downstream risk even when there is no evidence of traditional malware deployment, persistence, or confirmed host compromise.

This activity should be investigated as a potential data-exposure and credential-risk event when vulnerable Ollama infrastructure was reachable from untrusted networks or when suspicious model lifecycle behavior occurred during the exposure window.
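The sequence-based model above can be illustrated with a short correlation sketch. It assumes telemetry has already been normalized into per-host events; the event categories, the `Event` type, the `correlate` function, and the 24-hour window are all hypothetical choices for illustration and would need tuning against real log sources.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical normalized event categories (assumptions, not a log schema).
INGESTION = "model_ingestion"
FOLLOW_ON = {
    "new_artifact",
    "runtime_instability",
    "unusual_egress",
    "model_push",
    "credential_anomaly",
}


@dataclass(frozen=True)
class Event:
    host: str
    kind: str
    time: datetime


def correlate(events, exposed_hosts, window=timedelta(hours=24)):
    """Return {host: sorted follow-on kinds} for exposed hosts where suspicious
    model ingestion is followed by a follow-on signal within `window`."""
    findings = {}
    for host in exposed_hosts:
        host_events = [e for e in events if e.host == host]
        ingestion_times = [e.time for e in host_events if e.kind == INGESTION]
        hits = set()
        for e in host_events:
            # A follow-on signal counts only if it occurs at or after an
            # ingestion event and no later than `window` afterwards.
            if e.kind in FOLLOW_ON and any(
                t <= e.time <= t + window for t in ingestion_times
            ):
                hits.add(e.kind)
        if hits:
            findings[host] = sorted(hits)
    return findings
```

Hosts that are exposed but show no ingestion event, or whose follow-on signals fall outside the window, are deliberately left out of the result: they remain candidates for hunting rather than escalation.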

S13 — Targets and Exposure Surface

Primary targets are self-hosted Ollama services and related AI model-serving environments that are vulnerable, unpatched, exposed, broadly reachable, or insufficiently segmented.

Relevant exposure surfaces include:

· Internet-facing Ollama services.

· Ollama services reachable from untrusted internal networks.

· Shared AI lab servers.

· Developer workstations running Ollama with broad network reach.

· Containerized Ollama deployments.

· Cloud-hosted model-serving workloads.

· Internal shared model infrastructure.

· CI or automation environments connected to Ollama.

· Model-serving systems connected to coding assistants, repositories, internal APIs, or cloud services.

· Ollama hosts that process prompts, source code, credentials, API tokens, tool outputs, customer data, or sensitive internal context.

The highest-risk exposure occurs when model-serving infrastructure is both reachable from untrusted paths and connected to sensitive workflows. Developer convenience deployments, AI labs, proof-of-concept servers, unmanaged containers, and temporary cloud instances may create elevated risk because they can combine sensitive context with weaker logging, weaker access control, unclear ownership, and incomplete monitoring.
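As a first-pass exposure check, the listener address of each inventoried Ollama service can be classified by reachability. The sketch below uses only the Python standard library `ipaddress` module; the function name and the four category labels are hypothetical. It assumes the bind address has already been extracted as a bare IP (for example from a service configuration or an `OLLAMA_HOST`-style setting with any port removed), and it says nothing about firewalls, proxies, or cloud security groups that may widen or narrow actual reachability.

```python
import ipaddress


def classify_bind_address(host: str) -> str:
    """Classify the IP address a model-server listener is bound to.

    Returns one of 'local-only', 'all-interfaces', 'private-network',
    or 'public'. Raises ValueError for anything that is not a bare IP
    address (for example a hostname or a host:port string).
    """
    addr = ipaddress.ip_address(host)
    if addr.is_loopback:
        return "local-only"
    # Check the wildcard address before is_private, because the ipaddress
    # module also reports 0.0.0.0 as private.
    if addr.is_unspecified:
        return "all-interfaces"
    if addr.is_private:
        return "private-network"
    return "public"
```

A result of `all-interfaces` or `public` would typically push a host to the front of the review queue, while `private-network` still warrants a check of which internal segments can reach it.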

S14 — Sectors / Countries Affected

Sectors Affected

· Technology, software development, AI engineering, and cloud-hosted enterprises.

· Financial services and banking.

· Healthcare and life sciences.

· Manufacturing and industrial operations.

· Energy, utilities, and critical infrastructure.

· Retail, ecommerce, and customer-support environments.

· Education, research institutions, and AI labs.

· Government and public-sector organizations.

· Telecommunications and managed service providers.

· Enterprise IT operations, security operations, and organizations running self-hosted AI infrastructure.

· Organizations using Ollama or comparable self-hosted model-serving environments to process source code, prompts, customer data, internal documentation, tool outputs, credentials, API tokens, cloud context, or automation workflows.

Countries Affected

· Global.

· Exposure is not limited to a single country or region because self-hosted Ollama deployments can exist anywhere organizations operate developer environments, AI labs, cloud workloads, research systems, shared model infrastructure, or internal automation.

· Countries with large technology sectors, cloud adoption, regulated industries, research institutions, software-development ecosystems, government AI adoption, or high-value enterprise data may face elevated operational exposure.

· Country-specific impact should be assessed by Ollama deployment presence, internet or untrusted-network reachability, patch state, sensitive workflow connection, telemetry quality, exposure duration, and incident-response readiness rather than geography alone.

S15 — Adversary Capability Profiling

Capability Level

Moderate to High.

Technical Sophistication

Technical sophistication is assessed as moderate to high because the most relevant activity requires identifying exposed AI model-serving infrastructure, interacting with model-ingestion or artifact-handling functionality, and potentially using exposed runtime context for downstream access. The activity does not require highly customized malware in every case, but it does require understanding AI infrastructure behavior, model lifecycle operations, exposed service paths, and the value of prompts, credentials, source code, and tool outputs.

Infrastructure Maturity

Infrastructure maturity may range from opportunistic scanning infrastructure to organized infrastructure used for staged access, model artifact transfer, outbound communication, or downstream credential use. Mature operators may rotate source infrastructure, vary model names, change artifact names, use cloud-hosted destinations, and avoid static indicators.

Operational Scale

Operational scale can range from opportunistic discovery of exposed Ollama services to targeted activity against organizations with valuable AI workflows, source code, customer data, cloud integrations, or developer automation. Scale increases when exposed services are discoverable, unpatched, unauthenticated, or deployed in shared lab and developer environments.

Escalation Likelihood

Escalation likelihood is moderate when exposed Ollama infrastructure is isolated, patched quickly, and not connected to sensitive workflows. Escalation likelihood becomes high when the service is internet-facing, unpatched, connected to repositories or cloud services, processing sensitive prompts or credentials, showing suspicious model-ingestion behavior, creating unusual artifacts, communicating with unfamiliar destinations, or followed by downstream credential activity.
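The escalation rule above can be expressed as a simple factor check. This is a sketch under stated assumptions: the factor names are hypothetical labels for the conditions listed in the paragraph, and treating any single factor as sufficient for a high rating is one reading of the "or" in the text, not a calibrated scoring model.

```python
# Hypothetical labels for the escalation conditions named in the text above.
ESCALATION_FACTORS = (
    "internet_facing",
    "unpatched",
    "connected_to_repos_or_cloud",
    "processes_sensitive_context",
    "suspicious_ingestion",
    "unusual_artifacts",
    "unfamiliar_egress",
    "downstream_credential_activity",
)


def escalation_likelihood(observed: set[str]) -> tuple[str, list[str]]:
    """Return ('moderate' | 'high', triggered factors in a stable order)."""
    unknown = observed - set(ESCALATION_FACTORS)
    if unknown:
        # Reject typos rather than silently ignoring an observed condition.
        raise ValueError(f"unknown factors: {sorted(unknown)}")
    triggered = [f for f in ESCALATION_FACTORS if f in observed]
    return ("high" if triggered else "moderate", triggered)
```

Returning the triggered factors alongside the rating keeps the reasoning visible to responders, which matters when the rating drives containment or credential-rotation decisions.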

S16 — Targeting Probability Assessment

Overall Targeting Probability

High for exposed, vulnerable, internet-facing, or broadly reachable Ollama infrastructure. Moderate for internal-only deployments with weak segmentation, shared access, sensitive workflow connections, or incomplete telemetry. Lower for patched, isolated, local-only deployments with strong access controls, no sensitive workflow connection, and reliable telemetry.

Targeting Drivers

· Internet-facing or broadly reachable Ollama services.

· Vulnerable or unpatched model-serving infrastructure.

· Weak authentication, access restriction, or segmentation around AI infrastructure.

· Developer, AI lab, or proof-of-concept deployments with unclear ownership.

· Ollama environments connected to source code, repositories, cloud services, internal APIs, CI systems, or automation tools.

· Runtime context that may include prompts, credentials, API tokens, secrets, tool outputs, or customer data.

· Incomplete logging, incomplete endpoint coverage, limited egress visibility, or weak model artifact monitoring.

· Public discoverability of exposed services and reusable model-serving access patterns.

Most Likely Targets

· Internet-facing Ollama services.

· Shared AI lab servers.

· Developer workstations or servers running Ollama with broad network reach.

· Cloud-hosted self-hosted model-serving workloads.

· Containerized Ollama deployments with exposed ports or weak ingress controls.

· Internal shared model infrastructure used by multiple teams.

· AI systems connected to coding assistants, repositories, CI systems, internal APIs, or cloud services.

· Organizations using self-hosted model infrastructure for sensitive prompts, proprietary code, customer data, or automation workflows.

S17 — MITRE ATT&CK Chain Flow Mapping

Stage 1 — Exposure Identification and Target Selection

Adversaries identify Ollama services reachable from the internet, untrusted internal networks, shared AI lab environments, cloud workloads, or broadly accessible model-serving paths. This stage may involve internet-scale discovery, targeted probing, or validation of access to exposed AI model infrastructure.

MITRE ATT&CK Mapping

T1595 — Active Scanning

Stage 2 — Initial Access Through Exposed Model Server

Adversaries interact with exposed Ollama service functionality and attempt to exploit or abuse vulnerable model-serving infrastructure. The core access path is exploitation or abuse of a public-facing or untrusted-reachable application service.

MITRE ATT&CK Mapping

T1190 — Exploit Public-Facing Application

Stage 3 — Suspicious Model Lifecycle Interaction

Adversaries may interact with model-ingestion, model-import, model artifact, blob, or model-push functionality. This stage is the key activity anchor for the report and should be assessed through route-level logs, proxy logs, endpoint file activity, model storage changes, and container telemetry where available.

MITRE ATT&CK Mapping

T1105 — Ingress Tool Transfer, conditional where model artifacts, files, or external content are transferred into the model-serving environment

Stage 4 — Conditional Sensitive Data or Credential Exposure

If vulnerable runtime context is exposed, adversaries may obtain sensitive prompts, credentials, API tokens, environment variables, source code, customer data, tool outputs, or other material processed by the Ollama environment. This stage should remain evidence-driven and should not be assumed without supporting telemetry, artifact review, credential activity, or incident-response findings.

MITRE ATT&CK Mapping

T1552 — Unsecured Credentials, conditional where exposed tokens, secrets, environment variables, or credentials are identified

Stage 5 — Conditional Outbound Transfer or External Communication

If suspicious model activity is followed by outbound communication, adversaries may transfer model artifacts, exposed runtime data, or related content through unfamiliar external infrastructure. This stage should require linkage to the Ollama host, container, model activity window, or related outbound destination. It should not be assumed from egress alone.

MITRE ATT&CK Mapping

T1567 — Exfiltration Over Web Service, conditional where outbound transfer uses cloud storage, file-sharing, web-service, or other externally hosted destinations.

T1041 — Exfiltration Over C2 Channel, conditional where outbound transfer is linked to suspected adversary-controlled communication rather than approved model registries, update services, telemetry, backups, monitoring, or container registry activity.

Stage 6 — Conditional Downstream Credential or Cloud Use

If credentials, API tokens, repository tokens, cloud keys, or service-account material were exposed, adversaries may attempt downstream access to cloud services, repositories, internal APIs, or automation platforms. This stage should require identity, repository, cloud, credential-management, or audit-log evidence.

MITRE ATT&CK Mapping

T1078 — Valid Accounts, conditional where exposed or stolen credentials are used for downstream access

S18 — Attack Path Narrative (Signal-Aligned Execution Flow)

Initial Exposure

The attack path begins when an Ollama model server is exposed to the internet, an untrusted internal network, a shared AI lab environment, a cloud workload, a container ingress path, or a developer environment with broad network reach. This exposure may occur through public IP association, permissive firewall rules, weak ingress controls, unmanaged lab infrastructure, temporary cloud deployment, or developer convenience configuration.

At this stage, the primary signal is exposure rather than confirmed compromise. The required defensive action is to validate whether the Ollama service is reachable from untrusted sources, whether the deployment is vulnerable or unpatched, and whether the service processes sensitive prompts, code, credentials, tool outputs, customer data, or automation context.

Model-Service Interaction

Adversaries interact with the exposed Ollama service from internet-originating, unfamiliar, untrusted, or otherwise unapproved infrastructure. This activity may appear as targeted probing, repeated access attempts, interaction with model-related API functionality, abnormal request timing, large request bodies, or access inconsistent with approved administrative or model-management workflows.

The primary signal is suspicious interaction with an exposed model-serving service. This should support investigation or exploit-attempt classification, but it should not be treated as confirmed data exposure without supporting artifact, runtime, egress, credential, or incident-response evidence.

Model Lifecycle Activity

If the exposed service is vulnerable or improperly controlled, attacker interaction may involve model ingestion, model import, artifact handling, blob activity, or model-push behavior. This is the key behavioral stage for the report because it connects untrusted access to model lifecycle functionality rather than ordinary service reachability.

The primary signals at this stage are suspicious model-ingestion activity, new or unusual model artifacts, model storage changes, manifest updates, temporary model components, unusual model file sizes, or writes outside approved model paths. These signals become more meaningful when they occur on internet-facing, unpatched, shared, or sensitive Ollama infrastructure.
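
One of the signals above, writes outside approved model paths, can be checked with a short path comparison. The sketch below assumes file-write telemetry already provides the written path as a POSIX string. The approved root shown is an assumption based on the commonly documented Linux service location for Ollama model storage and should be replaced with the paths in the local baseline. It requires Python 3.9 or later for `is_relative_to`.

```python
import posixpath
from pathlib import PurePosixPath

# Assumption: replace with the model storage roots recorded in your baseline.
APPROVED_MODEL_ROOTS = [PurePosixPath("/usr/share/ollama/.ollama/models")]


def outside_approved_paths(written_path: str) -> bool:
    """Return True when a file write lands outside every approved model root."""
    # Collapse "." and ".." segments so traversal-style paths cannot
    # appear to sit inside an approved root.
    normalized = PurePosixPath(posixpath.normpath(written_path))
    return not any(normalized.is_relative_to(root)
                   for root in APPROVED_MODEL_ROOTS)
```

The check is purely lexical: it does not resolve symlinks, so a host-side review is still needed where symlinked storage is in use.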

Runtime and Artifact Anomalies

Suspicious model lifecycle activity may be followed by runtime instability or abnormal host, container, or storage behavior. Observable activity may include Ollama crashes, process restarts, container restarts, abnormal memory pressure, model-loading errors, service instability, or model artifact changes that do not align with approved model-management activity.

The primary signal is a time-linked relationship between suspicious model-service interaction and abnormal runtime or artifact behavior. Instability alone is not sufficient for confirmed compromise, but instability following untrusted model-ingestion activity should be treated as a high-priority investigation lead.
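
The time-linked relationship described above can be approximated by pairing each suspicious ingestion event with runtime anomalies that follow it within a bounded window. This is a sketch only: the function name is hypothetical, and the 15-minute default is an assumption that should be tuned to the environment.

```python
from datetime import datetime, timedelta


def instability_after_ingestion(ingestion_times, anomaly_times,
                                window=timedelta(minutes=15)):
    """Return (ingestion, anomaly) pairs where the anomaly occurs at or
    after the ingestion event and no later than `window` afterwards."""
    pairs = []
    for ingested in sorted(ingestion_times):
        for anomaly in sorted(anomaly_times):
            if timedelta(0) <= anomaly - ingested <= window:
                pairs.append((ingested, anomaly))
    return pairs


# Illustrative timestamps only.
ingest = datetime(2026, 5, 1, 12, 0)
crash = datetime(2026, 5, 1, 12, 4)
linked = instability_after_ingestion([ingest], [crash])
```

Anomalies that precede the ingestion event, or that fall outside the window, are not paired, which keeps routine restarts from being attributed to unrelated model activity.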

Outbound Communication

The attack path becomes more serious when suspicious model activity is followed by outbound communication from the Ollama host or container. Outbound behavior may include communication with newly observed, rare, unapproved, cloud-storage, file-sharing, anonymous-hosting, or unknown model-registry destinations.

The primary signal is a same-host or same-container relationship between suspicious model lifecycle activity and unusual outbound communication. This requires careful distinction between approved model pulls, approved model pushes, patching, telemetry, backups, monitoring, container registry activity, and unfamiliar destinations outside the approved model-source or egress baseline.

Conditional Downstream Credential or Data Use

If sensitive runtime context is exposed, downstream activity may appear in identity, cloud, repository, CI, internal API, secrets-management, or credential-management telemetry. Relevant activity may include unusual API-token use, service-account activity from unfamiliar sources, repository access anomalies, cloud API calls, secret reads, new access keys, or use of credentials associated with the Ollama environment.

This stage is conditional and should not be assumed without defensible linkage to the exposed model server, the exposure window, suspicious model lifecycle activity, or affected credentials and identities.

Compromise Classification

Exposure without evidence of suspicious access should be classified as exposed.

Suspicious access to an exposed Ollama service without meaningful model lifecycle activity should be classified as targeted or attempted interaction.

Suspicious access followed by model-ingestion activity, unusual model artifacts, runtime instability, abnormal storage behavior, or unfamiliar outbound communication should be classified as probable exploitation or probable suspicious use.

Confirmed compromise or confirmed data exposure should require supporting artifact review, endpoint evidence, application evidence, network evidence, credential-use evidence, cloud or repository evidence, or validated incident-response findings.
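
The four classification levels can be expressed as an ordered decision. The sketch below assumes the service is already known to be exposed. The `Classification` enum and `classify` function are hypothetical names, and `validated_evidence` stands in for any of the supporting evidence types listed above.

```python
from enum import Enum


class Classification(Enum):
    EXPOSED = "exposed"
    ATTEMPTED = "targeted or attempted interaction"
    PROBABLE = "probable exploitation or probable suspicious use"
    CONFIRMED = "confirmed compromise or confirmed data exposure"


def classify(suspicious_access: bool,
             follow_on_signals: frozenset = frozenset(),
             validated_evidence: bool = False) -> Classification:
    """Classify an already-exposed Ollama service by available evidence."""
    # Confirmation requires validated artifact, endpoint, network,
    # credential-use, cloud, repository, or incident-response evidence.
    if validated_evidence:
        return Classification.CONFIRMED
    # Suspicious access plus at least one follow-on signal.
    if suspicious_access and follow_on_signals:
        return Classification.PROBABLE
    # Suspicious access with no meaningful model lifecycle activity.
    if suspicious_access:
        return Classification.ATTEMPTED
    return Classification.EXPOSED
```

Follow-on signals without suspicious access do not raise the classification in this sketch. They would more likely be handled as ordinary operational anomalies until linked to untrusted interaction.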

S19 — Attack Chain Risk Amplification Summary

AI Infrastructure Sensitivity

Risk is amplified because the exposed asset is an AI model-serving environment rather than a generic development service. Ollama infrastructure may process prompts, source code, credentials, API tokens, customer data, tool outputs, internal documentation, and automation context.

Runtime Context Exposure

Risk increases when defenders cannot determine what sensitive data, prompts, secrets, or workflow context existed in memory or runtime context during the exposure window. Even limited uncertainty can force credential review, prompt and data assessment, repository review, and downstream access validation.

Model Lifecycle Abuse

Risk increases when suspicious access is followed by model ingestion, artifact handling, model import, blob activity, model-push behavior, or abnormal model storage changes. These behaviors connect exposure to activity that is directly relevant to the Ollama risk scenario.

Artifact and Runtime Instability

Risk increases when suspicious model activity is followed by new model files, unusual artifacts, manifest changes, temporary components, Ollama crashes, process restarts, container restarts, memory pressure, or model-loading errors. These signals can indicate abnormal model handling even when direct memory visibility is unavailable.

Outbound Movement

Risk increases when the Ollama host or container communicates with newly observed, rare, unexplained, unapproved, cloud-storage, file-sharing, anonymous-hosting, or unknown model-registry destinations. This behavior may indicate artifact transfer, exposed data movement, or attacker-directed communication.

Downstream Credential Exposure

Risk increases when exposed runtime context may include API tokens, repository tokens, cloud keys, service-account material, environment variables, or automation secrets. Downstream credential use can convert an AI infrastructure exposure into a broader cloud, repository, identity, or internal API investigation.

Detection Confidence Dependency

Risk increases when asset inventory, route-level logs, endpoint telemetry, file telemetry, container logs, DNS records, egress telemetry, identity logs, repository logs, cloud audit logs, or credential-management records are missing or inconsistently retained.

Operational Ambiguity

Risk increases when legitimate model experimentation, model pulls, model imports, developer testing, AI platform automation, backups, patching, monitoring, or vulnerability scanning overlap with suspicious activity. Weak ownership records and incomplete baselines can make it difficult to separate approved model-management behavior from attacker-driven interaction.

Response Complexity

Risk increases when affected Ollama infrastructure supports developer workflows, coding assistants, AI labs, CI systems, customer-support workflows, cloud services, repositories, internal APIs, or shared model infrastructure. Containment may require service isolation, workflow suspension, credential rotation, model artifact review, repository review, and executive governance.

S20 — Tactics, Techniques, and Procedures

Figure 3

Exposure Targeting and Model-Service Discovery

· Attackers target internet-facing or untrusted-accessible Ollama services, shared AI lab servers, cloud-hosted model-serving workloads, containerized deployments, and developer environments with broad network reach.

· Attackers may use scanning, probing, low-volume validation, repeated service interaction, or access from unfamiliar infrastructure to identify exposed model-serving systems.

· Scan-only or exposure-only activity should remain classified as exposure or attempted interaction unless correlated with suspicious model lifecycle activity, artifact changes, runtime instability, outbound communication, or downstream credential use.

Interaction with Exposed Model-Serving Functionality

· Attackers interact with public-facing or untrusted-reachable Ollama functionality on vulnerable, unpatched, or weakly controlled deployments.

· Suspicious behavior may include interaction with model-related API functionality, abnormal request timing, repeated model-management attempts, large request bodies, or activity inconsistent with approved administrative sources.

· Interaction should be treated as a higher-confidence signal when it occurs against internet-facing, unpatched, shared, sensitive, or poorly segmented Ollama infrastructure.

Model Lifecycle Abuse

· Attackers may attempt to use or abuse model-ingestion, model-import, artifact-handling, blob, or model-push functionality.

· Observable procedures may include new model artifacts, abnormal model storage changes, manifest updates, temporary model components, unusual model file sizes, or writes outside approved model paths.

· Legitimate model operations can resemble suspicious activity, so validation must account for approved model pulls, imports, refreshes, backups, developer testing, AI platform automation, and documented maintenance activity.

Runtime and Artifact Anomalies

· Suspicious model activity may be followed by Ollama crashes, process restarts, container restarts, memory pressure, service instability, or model-loading errors.

· Runtime instability near suspicious model-ingestion activity should increase severity because it may indicate abnormal model handling or exploit-adjacent behavior.

· Instability should be evaluated against approved upgrades, service restarts, container redeployments, resource exhaustion, lab testing, and known unstable development workflows.

Outbound Communication and Artifact Transfer

· Attackers may cause or use outbound communication from the Ollama host or container after suspicious model activity.

· Observable procedures may include connections to newly observed, rare, unexplained, unapproved, cloud-storage, file-sharing, anonymous-hosting, or unknown model-registry destinations.

· Outbound communication should be evaluated against approved model registries, update services, cloud destinations, automation systems, backups, monitoring, telemetry, and container registry activity.

Conditional Downstream Credential Use

· If exposed runtime context includes credentials, API tokens, repository tokens, cloud keys, service-account material, or automation secrets, attackers may attempt downstream access outside the Ollama environment.

· Observable procedures may include unusual repository access, cloud API calls from unfamiliar infrastructure, rare service-account use, secret reads, new access keys, or API-token use after the exposure window.

· Downstream credential activity should be treated as conditional unless it is temporally and logically linked to exposed Ollama infrastructure, suspicious model activity, or affected credentials.

Evasion and Blending Behavior

· Attackers may blend with legitimate AI infrastructure activity by using low-volume access, delayed follow-on behavior, cloud-hosted infrastructure, ordinary-looking model operations, or artifact names that resemble normal model workflows.

· Attackers may vary source infrastructure, destination infrastructure, model names, artifact names, request timing, transfer size, and delivery patterns.

· Detection should remain behavior-led because exploit strings, public proof-of-concept artifacts, model names, file names, source IPs, and destination indicators can change quickly.

S20A — Adversary Tradecraft Summary

The adversary tradecraft in this report is best understood as abuse of exposed self-hosted AI model infrastructure, with potential consequences for sensitive runtime context. The governing behavior is not phishing delivery, ransomware execution, destructive malware, or a guaranteed persistence playbook. The governing behavior is suspicious interaction with exposed Ollama model-serving functionality that may be followed by model lifecycle activity, artifact anomalies, runtime instability, outbound communication, or downstream credential use.

Attackers can vary source infrastructure, request timing, model names, artifact names, transfer size, destination infrastructure, and visible interaction patterns. The tradecraft should therefore be assessed through the relationship between exposed service access and subsequent model-serving behavior, including model ingestion, artifact changes, runtime anomalies, outbound communication, and credential or identity activity.

Operationally, exposed Ollama infrastructure requires urgent validation and hunting. Exposure indicates risk. Suspicious service interaction may indicate attempted exploitation or abuse. Suspicious model lifecycle activity followed by artifact changes, runtime instability, unusual outbound communication, or downstream credential use supports probable exploitation or probable suspicious use. Confirmed compromise requires stronger host, application, artifact, identity, cloud, repository, credential-management, or incident-response evidence.

The highest-confidence tradecraft signals are suspicious access to exposed Ollama infrastructure followed by one or more of the following: model-ingestion activity, abnormal model artifact creation, unexpected model storage changes, runtime instability, process or container restart behavior, unfamiliar outbound communication, model-push behavior, large egress, or downstream use of credentials, API tokens, repository tokens, cloud keys, or service accounts.

Tradecraft should be assessed conservatively. No actor identity, malware family, destructive objective, persistence mechanism, lateral-movement path, payload signature, or complete post-exploitation playbook should be asserted unless validated by vendor guidance, government reporting, forensic review, or incident-response evidence.

S21 — Detection Strategy Overview

Detection Philosophy

Detection for this exposure should treat Ollama as an AI infrastructure service, not as a standard web application or isolated developer utility. The primary detection objective is to identify when a self-hosted model server is reachable from an untrusted network path and receives requests consistent with suspicious model-ingestion activity.

The highest-value detection approach is behavior-led correlation. Detection should not depend on a single static exploit string, model name, file name, or public proof-of-concept artifact. The defensive focus should be on the sequence of exposed service access, model-ingestion activity, abnormal model artifact handling, and possible outbound movement following model ingestion.

This issue should be handled as a potential data-exposure event when vulnerable Ollama infrastructure was reachable from the internet, exposed to untrusted internal networks, or connected to workflows that process secrets, prompts, source code, customer data, or tool outputs.

Primary Detection Anchors

· Ollama service exposure on internet-facing, untrusted, or broadly reachable network interfaces.

· Unexpected access to Ollama API functionality associated with model ingestion, model import, model artifact handling, or model push behavior.

· Inbound requests from unfamiliar external infrastructure, scanning sources, anonymous hosting providers, or previously unseen internal systems.

· Model-ingestion activity or model artifact modification outside approved administrative activity.

· New or unusual GGUF or model-related artifacts written to disk by the Ollama process.

· Ollama activity followed by unusual outbound traffic, model push behavior, large egress, or communication with unfamiliar destinations.

· Process instability, crash behavior, abnormal memory pressure, or runtime anomalies occurring near suspicious model-ingestion activity.

· Confirmed presence of unpatched Ollama infrastructure.

Detection Prioritization Model

Priority 1 detection should focus on vulnerable or potentially vulnerable Ollama services exposed to untrusted access and receiving requests associated with model ingestion.

Priority 2 detection should focus on model-ingestion activity followed by outbound communication, model push behavior, unexpected artifact transfer, or large data movement.

Priority 3 detection should focus on host-level and container-level evidence, including new model files, unexpected GGUF handling, abnormal writes to model storage paths, process crashes, or Ollama runtime instability.

Priority 4 detection should focus on compensating evidence where direct application telemetry is limited. This includes firewall logs, reverse proxy logs, endpoint telemetry, container runtime logs, DNS telemetry, ingress logs, and egress monitoring.

Single-source alerts should be treated as triage leads unless they contain strong evidence of exposed vulnerable service access and suspicious model lifecycle behavior. Confirmed escalation should require correlation across network, application, endpoint, container, or egress telemetry.

Correlation Strategy

Detection should require a sequence-based view of the activity rather than isolated event matching.

Minimum suspicious correlation should include an Ollama service reachable from an untrusted path, followed by access to model-ingestion functionality, followed by at least one supporting signal such as unusual model artifact creation, outbound transfer, unfamiliar destination communication, service instability, or suspicious post-event credential activity.

High-confidence escalation should require a stronger combination of signals. An unpatched Ollama service, untrusted access to model-ingestion functionality, new or abnormal model artifact creation, outbound communication to an unfamiliar destination, and downstream evidence of possible secret or token use should be treated as a severe incident.

Exposure alone should not be classified as confirmed exploitation. Exposure establishes risk. Suspicious model-ingestion activity establishes probable attack activity. Correlated outbound movement, artifact transfer, memory-related instability, or downstream credential use strengthens the case for confirmed or likely compromise.
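
The sequence-based correlation described in this section can be sketched as an ordered check over normalized events for a single host. The event kinds, the `Event` type, and both function names are hypothetical. The sketch also assumes upstream telemetry has already been reduced to timestamped labels.

```python
from dataclasses import dataclass

# Hypothetical labels for the supporting signals named above.
SUPPORTING_KINDS = {
    "artifact_created", "outbound_transfer", "unfamiliar_destination",
    "service_instability", "credential_activity",
}


@dataclass(frozen=True)
class Event:
    ts: float   # epoch seconds
    kind: str   # "ingestion_access" or one of SUPPORTING_KINDS


def _supporting_after_ingestion(events):
    """Supporting signal kinds seen at or after the first ingestion access."""
    ingestion = [e.ts for e in events if e.kind == "ingestion_access"]
    if not ingestion:
        return set()
    first = min(ingestion)
    return {e.kind for e in events
            if e.kind in SUPPORTING_KINDS and e.ts >= first}


def minimum_suspicious(untrusted_reachable: bool, events) -> bool:
    """Untrusted reachability, ingestion access, then any supporting signal."""
    return untrusted_reachable and bool(_supporting_after_ingestion(events))


def severe_incident(unpatched: bool, untrusted_reachable: bool, events) -> bool:
    """The stronger combination described for high-confidence escalation."""
    required = {"artifact_created", "unfamiliar_destination",
                "credential_activity"}
    return (unpatched and untrusted_reachable
            and required <= _supporting_after_ingestion(events))
```

Ordering matters in this sketch: a supporting signal that occurs before the first ingestion access does not satisfy the correlation, which reflects the sequence-based view rather than isolated event matching.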

Telemetry Prioritization

Network telemetry should be used to identify exposed Ollama services, inbound access sources, unusual request patterns, and outbound movement following model activity.

Reverse proxy, API gateway, ingress, and load balancer telemetry should be prioritized where Ollama is deployed behind infrastructure that can provide route-level visibility.

Endpoint telemetry should be used to confirm Ollama process behavior, model artifact creation, file-system writes, crash behavior, runtime anomalies, and unusual process or network activity.

Container telemetry should be used where Ollama runs in Docker, Kubernetes, developer labs, AI experimentation environments, CI systems, or ephemeral compute environments.

Identity, secrets, and cloud audit telemetry should be reviewed when suspicious exposure is confirmed, because the material harm may occur after memory disclosure, when exposed credentials, tokens, prompts, or environment variables are used elsewhere.

Detection Design Constraints

Detection logic should not assume that all Ollama usage is malicious. Local developer use, approved model experimentation, internal automation, and administrative model management may be legitimate.

Detection logic should not rely on static exploit names, public proof-of-concept strings, specific file names, or fixed model labels. Those indicators are fragile and likely to change.

Detection logic should not classify every exposed Ollama service as compromised. Public or broad exposure is a critical risk condition, but confirmed compromise requires suspicious interaction or follow-on evidence.

Detection logic should account for partial telemetry. Many self-hosted AI deployments may lack complete application logs, centralized endpoint telemetry, or consistent reverse proxy coverage.

Detection logic should distinguish between approved model pulls, approved model imports, normal local model management, and suspicious model-ingestion or push behavior involving unfamiliar sources, destinations, timing, or hosts.

Baseline and Deployment Requirements

Organizations should maintain an inventory of approved Ollama hosts, listening interfaces, exposed ports, owning teams, deployment environments, and expected access paths.

Baseline should include approved administrative sources, approved model registries, approved model storage paths, expected model artifact sizes, normal model-ingestion frequency, and expected outbound destinations.

High-priority monitoring should cover internet-facing deployments, lab environments, developer workstations, AI experimentation servers, internal shared model infrastructure, and systems connected to coding assistants or agentic workflows.

Where native application telemetry is incomplete, organizations should deploy compensating visibility through reverse proxies, endpoint telemetry, container logs, firewall logs, DNS monitoring, and egress controls.
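
The inventory and baseline fields listed in this section can be captured in a small record type with a gap check. This is a hedged sketch: the `OllamaHostRecord` fields and the `inventory_gaps` helper are hypothetical, and the example values (including port 11434, which is commonly documented as the Ollama default) are assumptions to verify against each deployment.

```python
import ipaddress
from dataclasses import dataclass, field


@dataclass
class OllamaHostRecord:
    """Hypothetical inventory entry for one approved Ollama host."""
    hostname: str
    listen_address: str
    port: int
    owning_team: str
    environment: str
    approved_admin_sources: list = field(default_factory=list)
    approved_registries: list = field(default_factory=list)


def inventory_gaps(rec: OllamaHostRecord) -> list:
    """List baseline gaps that make later triage harder."""
    gaps = []
    # Any bind address other than loopback is reachable from the network.
    if not ipaddress.ip_address(rec.listen_address).is_loopback:
        gaps.append("listens beyond loopback")
    if not rec.owning_team:
        gaps.append("no owning team")
    if not rec.approved_admin_sources:
        gaps.append("no approved administrative sources")
    if not rec.approved_registries:
        gaps.append("no approved model registries")
    return gaps


# Illustrative record only.
lab = OllamaHostRecord("lab-gpu-01", "0.0.0.0", 11434, "", "lab")
```

A record with several gaps is not evidence of compromise. It indicates that the baseline needed to separate approved model management from suspicious activity does not yet exist for that host.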

Variant Resilience Requirements

Detections should remain effective when an attacker changes model names, artifact names, request timing, source infrastructure, destination infrastructure, payload size, or delivery pattern.

Detection should focus on the durable behavior pattern: exposed AI model service, untrusted interaction, model-ingestion activity, abnormal artifact handling, and suspicious outbound or post-exposure activity.

Detection should support both internet-exposed and internally exposed scenarios. Internal exposure remains material when untrusted users, compromised endpoints, contractor systems, lab networks, or lateral movement paths can reach the model server.

Detection should also generalize beyond this single issue. The broader defensive pattern is a model-ingestion interface reachable by untrusted sources on a process whose memory may hold sensitive data.

Operational Detection Model

SOC triage should first determine whether the Ollama instance was unpatched, exposed to untrusted access, or reachable from a broad internal network segment.

The second triage step should determine whether model ingestion, model import, blob upload, model export, or model push behavior occurred during the exposure window.

The third triage step should review host, container, and storage telemetry for new model artifacts, abnormal file writes, runtime instability, or suspicious process behavior.

The fourth triage step should review outbound network traffic, DNS activity, cloud audit logs, identity logs, and secrets-management telemetry for signs that exposed memory contents may have enabled follow-on access.

Confirmed or strongly suspected exposure should trigger service containment, upgrade validation, exposure removal, secret rotation, prompt and data review, model artifact review, and downstream credential-abuse hunting.
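
The second triage step depends on scoping model lifecycle activity to the exposure window. A minimal sketch of that filter follows. The event labels and function name are hypothetical, and the window boundaries are assumed to come from asset, firewall, or patch records.

```python
from datetime import datetime

# Hypothetical labels for the lifecycle behaviors named in the second step.
LIFECYCLE_KINDS = {"ingestion", "import", "blob_upload", "export", "push"}


def lifecycle_events_in_window(events, exposed_from, remediated_at):
    """Return lifecycle events, in time order, that fall inside the
    exposure window. `events` is an iterable of (timestamp, kind) tuples."""
    return [(ts, kind) for ts, kind in sorted(events)
            if kind in LIFECYCLE_KINDS and exposed_from <= ts <= remediated_at]


# Illustrative values only.
window_start = datetime(2026, 5, 1, 0, 0)
window_end = datetime(2026, 5, 3, 0, 0)
observed = [
    (datetime(2026, 5, 2, 9, 30), "push"),
    (datetime(2026, 5, 2, 9, 0), "blob_upload"),
    (datetime(2026, 5, 4, 8, 0), "import"),
    (datetime(2026, 5, 2, 10, 0), "chat"),
]
in_scope = lifecycle_events_in_window(observed, window_start, window_end)
```

If the start of the exposure window is unknown, the earliest retained log entry is a conservative substitute, and the uncertainty should be recorded in the triage notes.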

S22 — Primary Detection Signals

Primary Detection Signals

Primary detection should focus on behavior indicating that an exposed Ollama service is receiving suspicious model-ingestion activity from an untrusted source.

The strongest primary signal is untrusted access to Ollama API functionality associated with model ingestion, model artifact handling, model import, or model push behavior. This signal becomes materially stronger when the source is external, newly observed, associated with scanning activity, or inconsistent with normal administrative access patterns.

Model-ingestion activity against an Ollama instance that is internet-facing, broadly reachable, or exposed to untrusted internal networks should be treated as a high-priority investigation. Exposure alone is a risk condition, but exposure combined with suspicious model-ingestion behavior creates a stronger detection signal.

New or unusual model artifacts written by the Ollama process are also primary signals. This includes model files, GGUF-related artifacts, temporary model components, or storage-path changes that occur outside normal administrative activity.

Ollama activity followed by outbound model push behavior, large egress, or communication with unfamiliar destinations should be treated as a primary escalation signal. This pattern is especially important when it follows model-ingestion activity from an untrusted source.
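
Where reverse proxy or ingress logs provide route-level visibility, the strongest primary signal can be approximated by combining a route check with a source check. The route prefixes below are assumptions drawn from Ollama's public REST API documentation and should be verified against the deployed version. The approved administrative network is a hypothetical placeholder.

```python
import ipaddress

# Assumption: lifecycle-related route prefixes; confirm for your version.
LIFECYCLE_PREFIXES = ("/api/create", "/api/pull", "/api/push", "/api/blobs/")

# Hypothetical approved administrative source range.
APPROVED_ADMIN_NETWORKS = [ipaddress.ip_network("10.20.0.0/24")]


def is_lifecycle_route(path: str) -> bool:
    """True when the request path targets model lifecycle functionality."""
    return path.startswith(LIFECYCLE_PREFIXES)


def is_untrusted_source(source_ip: str) -> bool:
    """True when the source is outside every approved admin network."""
    addr = ipaddress.ip_address(source_ip)
    return not any(addr in net for net in APPROVED_ADMIN_NETWORKS)


def primary_signal(path: str, source_ip: str) -> bool:
    """Untrusted access to model lifecycle functionality."""
    return is_lifecycle_route(path) and is_untrusted_source(source_ip)
```

Matching on route families and source trust, rather than payload content, keeps the check consistent with the behavior-led approach and resilient to changes in model names or exploit strings.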

Supporting Detection Signals

Supporting signals should be used to increase confidence, establish timeline, and distinguish suspicious activity from legitimate model management.

Relevant supporting signals include newly observed source IP addresses, unfamiliar internal hosts reaching Ollama, unexpected user agents, unusual request timing, request patterns outside approved maintenance windows, or repeated interaction with model-related API functionality.

Additional supporting signals include changes to model storage directories, creation of unusually sized model artifacts, modification of model metadata, temporary file creation near model-ingestion events, or model lifecycle activity that does not match approved automation.

Environment context should also be used as supporting evidence. Suspicious activity is more severe when the Ollama instance is connected to coding assistants, agentic workflows, developer repositories, customer data, credentials, tool outputs, or other sensitive runtime context.

Patch state should be used as a confidence modifier. An unpatched Ollama instance exposed to suspicious model-ingestion activity should receive higher severity than a fully patched instance with the same network pattern.

Exploit Attempt and Instability Signals

Exploit-attempt detection should focus on observable behavior rather than payload construction details.

Suspicious instability signals include Ollama crashes, abnormal process restarts, memory pressure, unexpected service failures, model-loading errors, malformed model-handling events, or runtime anomalies occurring close to suspicious model-ingestion activity.

Repeated model-ingestion failures from the same source should be treated as suspicious when they occur against an exposed Ollama service. This may indicate probing, malformed model handling, or unsuccessful attempts to trigger abnormal model-loader behavior.

Service instability should not be treated as confirmed exploitation by itself. It becomes more meaningful when correlated with untrusted API access, new model artifacts, abnormal model handling, or outbound communication following model ingestion.
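
Repeated model-ingestion failures from the same source can be surfaced with a simple count over lifecycle-route log records. In this sketch the input shape, the function name, and the threshold of three are all assumptions. HTTP status codes of 400 or above are used as a stand-in for ingestion failure.

```python
from collections import Counter


def repeated_failure_sources(records, threshold=3):
    """Return {source: failure_count} for sources at or above `threshold`.

    `records` is an iterable of (source_ip, http_status) tuples taken from
    requests to model lifecycle routes on an exposed service.
    """
    failures = Counter(src for src, status in records if status >= 400)
    return {src: n for src, n in failures.items() if n >= threshold}


# Illustrative records only.
sample = [
    ("203.0.113.7", 400), ("203.0.113.7", 500), ("203.0.113.7", 400),
    ("10.20.0.5", 200), ("198.51.100.9", 400),
]
flagged = repeated_failure_sources(sample)
```

A flagged source is a triage lead, not a finding. It gains weight only when correlated with the artifact, runtime, or outbound signals described in this section.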

Outbound Communication Signals

Outbound communication after suspicious model-ingestion activity should receive high investigative priority.

Relevant outbound signals include connections from the Ollama host or container to unfamiliar external destinations, unexpected model registries, anonymous infrastructure, file-sharing services, newly observed domains, cloud object storage, or destinations not present in the approved baseline.

Large outbound transfers following model ingestion should be treated as a high-risk signal, especially when paired with new model artifacts or model push behavior.

DNS queries to unfamiliar domains after suspicious Ollama activity should be reviewed as supporting evidence. This is particularly important when direct application logs are incomplete or unavailable.

Outbound traffic should be evaluated against the approved model-source and model-registry baseline. Communication with approved internal registries or known update sources should be treated differently from unfamiliar external destinations.
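
The baseline comparison can be sketched as a suffix match against approved destinations. The domain names shown are placeholders, and the function name and return labels are assumptions for illustration; a production baseline would normally live in configuration rather than in code.

```python
def classify_destination(host, approved_suffixes):
    """Label an outbound hostname as 'baseline' or 'unfamiliar'.

    A host matches when it equals an approved domain or is a subdomain of it.
    Matching on a leading dot avoids treating 'evil-example.org' as 'example.org'.
    """
    host = host.lower().rstrip(".")  # normalize case and a trailing root dot
    for suffix in approved_suffixes:
        suffix = suffix.lower()
        if host == suffix or host.endswith("." + suffix):
            return "baseline"
    return "unfamiliar"


# Hypothetical approved model-source and registry baseline.
APPROVED = ["registry.internal.example", "models.example.org"]
```

For example, `classify_destination("cdn.models.example.org", APPROVED)` is labeled as baseline, while a lookalike such as `evil-models.example.org.attacker.example` is labeled unfamiliar.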

Persistence and Post-Exploitation Signals (Conditional)

Persistence signals are conditional for this report. The primary risk is memory disclosure and data exposure; persistence may follow but should not be expected in every case.

Post-exploitation review should focus on downstream use of exposed information. Relevant signals include suspicious authentication attempts, unexpected API token use, cloud access from unfamiliar sources, newly created access keys, unusual repository access, abnormal credential-management activity, or unexpected use of credentials that may have existed in the Ollama runtime environment.

Persistence-related endpoint signals should be investigated when they occur after suspicious Ollama activity. These may include new services, scheduled tasks, unauthorized containers, unexpected startup entries, modified shell configuration, or new remote-access tooling on the Ollama host.

These signals should not be assumed to be part of every case. They should be treated as escalation indicators when they appear after confirmed or strongly suspected exposure.

Lateral Movement and Expansion Signals (Conditional)

Lateral movement signals are conditional and should be evaluated when the Ollama host has access to internal systems, repositories, credentials, model pipelines, cloud services, or agentic tooling.

Relevant signals include unusual internal connections from the Ollama host, access to systems outside its normal role, authentication attempts to developer platforms, movement toward credential-management systems, access to internal APIs, or interaction with shared model infrastructure.

Expansion risk is higher when Ollama runs on a developer workstation, shared AI lab server, CI host, cloud VM, or container platform with broad network reach.

Lateral movement should not be inferred from Ollama exposure alone. The inference requires follow-on evidence such as credential use, internal scanning, abnormal authentication, unexpected service access, or new connections from the Ollama environment to sensitive internal assets.

Signal Usage Constraints

Detection should not alert solely on the presence of Ollama.

Detection should not alert solely on local model usage.

Detection should not classify routine model pulls, approved model imports, or authorized model management as malicious without supporting context.

Detection should not rely on exploit-specific names, payload strings, artifact names, or public proof-of-concept indicators as the primary basis for detection.

Detection should not treat exposure alone as confirmed compromise. Exposure should trigger risk review and prioritization. Suspicious model-ingestion behavior should trigger investigation. Correlated artifact creation, outbound movement, instability, or downstream credential activity should trigger escalation.

Detection should not overstate persistence or lateral movement. Those behaviors are conditional and should only be included when supported by telemetry.

Signal confidence should be based on correlation across exposure, model-ingestion behavior, artifact activity, runtime instability, outbound movement, and downstream credential or identity activity.
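
The escalation ladder in the constraints above (exposure leads to risk review, suspicious model ingestion leads to investigation, correlated follow-on activity leads to escalation) can be sketched as a small triage function. The signal names and the `Action` enum are hypothetical labels, and the sketch assumes that corroborating signals escalate only when suspicious ingestion is also present.

```python
from enum import Enum


class Action(Enum):
    NONE = "none"
    RISK_REVIEW = "risk review"
    INVESTIGATE = "investigate"
    ESCALATE = "escalate"


# Hypothetical labels for the corroborating signals named in this section.
CORROBORATING = {
    "artifact_creation",
    "outbound_movement",
    "instability",
    "downstream_credential_activity",
}


def triage(signals):
    """Map a set of observed signal labels to the recommended action."""
    signals = set(signals)
    if "suspicious_ingestion" in signals and signals & CORROBORATING:
        return Action.ESCALATE
    if "suspicious_ingestion" in signals:
        return Action.INVESTIGATE
    if "exposure" in signals:
        return Action.RISK_REVIEW
    return Action.NONE
```

In this sketch, instability or outbound movement without suspicious ingestion does not escalate by itself, consistent with the constraint that single signals should not be treated as confirmed compromise.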

S23 — Telemetry Requirements

Endpoint and Process Execution Telemetry

Endpoint telemetry should capture Ollama process execution, service start and stop events, process ownership, runtime path, command-line context, child-process activity, and host-level network activity.

Required endpoint visibility should include the Ollama binary path, service account or user context, execution environment, process start time, process restart frequency, and network connections initiated by the Ollama process.

Telemetry should also capture whether Ollama is running as a local user process, system service, containerized workload, developer workstation process, lab server process, or cloud-hosted service. This context is required to distinguish normal local experimentation from exposed infrastructure behavior.

Process execution telemetry should support correlation between Ollama runtime activity, model-ingestion events, file-system changes, service instability, and outbound communication.

Memory and Execution Telemetry

Memory and execution telemetry should be treated as supporting visibility, not as a requirement for direct memory inspection.

Useful signals include abnormal memory pressure, repeated process restarts, crash-adjacent runtime anomalies, unexpected service failures, and execution behavior inconsistent with normal model loading.

The practical detection requirement is not direct inspection of process memory. It is the identification of abnormal runtime behavior occurring near suspicious model-ingestion activity.

Organizations should avoid relying on memory-only indicators, because many environments will not have consistent memory telemetry across developer systems, containers, lab servers, and cloud-hosted workloads.

Crash and Fault Telemetry

Crash and fault telemetry should capture Ollama process crashes, abnormal exits, restart loops, segmentation fault indicators, service-manager restart events, container restarts, kernel-level fault messages, and application-level model-loading errors.

Crash events should be time-correlated with model-ingestion activity, newly written model artifacts, inbound access from untrusted sources, and outbound communication following the crash or restart window.

A crash or restart by itself should not be treated as confirmed exploitation. It becomes more significant when it occurs near suspicious model-handling activity or when followed by artifact transfer, unusual egress, or downstream credential activity.
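
Time correlation between crashes and model-ingestion activity can be sketched as follows. The function name and the five-minute default are assumptions, and this sketch only considers crashes that occur at or after an ingestion event; some teams may also want to look at a short window before it.

```python
from datetime import timedelta


def crashes_near_ingestion(crash_times, ingestion_times, window=timedelta(minutes=5)):
    """Return crash timestamps that fall within `window` after any ingestion event.

    Both arguments are iterables of datetime objects from already-parsed logs.
    """
    ingestion_times = list(ingestion_times)
    return [
        crash
        for crash in crash_times
        if any(timedelta(0) <= crash - ingest <= window for ingest in ingestion_times)
    ]
```

Crashes returned by this sketch are candidates for further correlation with artifact, egress, and credential telemetry rather than findings in their own right.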

Crash and fault telemetry is especially important in environments where application-layer logs are incomplete or where the Ollama service is not deployed behind a reverse proxy.

File and Persistence Telemetry

File telemetry should capture model artifact creation, modification, deletion, and movement within Ollama model storage paths and related temporary directories.

Required visibility should include new GGUF-related artifacts, model manifests, blob storage changes, unexpected model metadata changes, unusually sized model files, temporary files associated with model ingestion, and model artifacts created outside approved administrative windows.

File telemetry should also capture unexpected writes by the Ollama process to locations outside approved model storage paths.
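
A check for writes outside approved model storage can be sketched with path-component matching. The storage root shown is an assumed Linux location and must be replaced with the paths actually used in the environment; the function name is hypothetical.

```python
import posixpath
from pathlib import PurePosixPath

# Assumed storage root for illustration; verify against the real deployment.
APPROVED_ROOTS = ["/usr/share/ollama/.ollama/models"]


def outside_approved(path, approved_roots=APPROVED_ROOTS):
    """Return True when `path` is not inside any approved model storage root.

    The path is normalized first so '..' segments cannot disguise a write
    outside the root, and matching is by path component rather than by
    string prefix, so '/models-evil' does not match '/models'.
    """
    normalized = PurePosixPath(posixpath.normpath(path))
    return not any(normalized.is_relative_to(root) for root in approved_roots)
```

The sketch works on path strings from file telemetry and does not resolve symbolic links, which would need to be handled on the host where the event was recorded.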

Persistence telemetry should be collected as conditional evidence. New services, scheduled tasks, unauthorized containers, startup entries, modified shell configuration, or remote-access tooling should be investigated when they appear after suspicious Ollama activity.

Persistence should not be assumed as part of the primary exposure pattern. It should be treated as an escalation signal when supported by timeline and host evidence.

Network and Outbound Communication Telemetry

Network telemetry should identify exposed Ollama services, listening interfaces, inbound source addresses, destination ports, request timing, and traffic volume.
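
Listening-interface review can be sketched by classifying the address a service is bound to. The category labels and function name are assumptions for this sketch; the input would come from host socket listings or configuration inventory, and Ollama's own default bind behavior should be verified for the deployed version.

```python
import ipaddress


def bind_exposure(bind_host):
    """Classify a listening address as loopback, all-interfaces, internal, or public."""
    if bind_host == "localhost":
        return "loopback"
    addr = ipaddress.ip_address(bind_host)  # raises ValueError on non-IP input
    if addr.is_loopback:
        return "loopback"          # reachable only from the local host
    if addr.is_unspecified:
        return "all-interfaces"    # 0.0.0.0 or :: listens on every interface
    if addr.is_private:
        return "internal"          # private-range address, still network reachable
    return "public"
```

An `all-interfaces` or `public` result indicates a service that warrants exposure validation; an `internal` result still matters where the internal network is shared or untrusted.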

Required visibility should include inbound access from external sources, unfamiliar internal systems, scanning infrastructure, anonymous hosting providers, and systems outside approved administrative paths.

Outbound telemetry should capture connections from the Ollama host or container to unfamiliar destinations, unexpected model registries, cloud object storage, file-sharing services, newly observed domains, or infrastructure outside the approved model-source baseline.

Egress telemetry should support volume analysis, destination reputation review, timing correlation, and comparison against expected model-management behavior.

Network telemetry should also identify large outbound transfers, repeated outbound attempts, unusual DNS lookups, and new external destinations contacted after model-ingestion activity.

Web and Application Telemetry (Conditional Availability)

Web and application telemetry should be collected wherever Ollama is deployed behind a reverse proxy, ingress controller, API gateway, load balancer, service mesh, or other fronting infrastructure.

Useful application-layer visibility includes request path, request method, source address, forwarded headers, response status, request timing, response size, user agent, authentication context where present, and administrative source context.

Route-level visibility is especially valuable for identifying access to model-ingestion, model import, artifact handling, and model push functionality.

Where direct Ollama application logs are limited, reverse proxy and ingress telemetry should be treated as the preferred source for route-level detection.
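
Route-level classification of reverse proxy logs can be sketched as a lookup from request path to functional category. The paths below are assumed from Ollama's public HTTP API documentation and should be verified against the deployed version; the category labels and function name are hypothetical.

```python
# Assumed route mapping; verify against the deployed Ollama version.
EXACT_ROUTES = {
    "/api/create": "model-ingestion",
    "/api/pull": "model-import",
    "/api/push": "model-push",
}
PREFIX_ROUTES = {
    "/api/blobs/": "artifact-handling",
}


def categorize_route(path):
    """Return the model-management category for a request path, or None."""
    path = path.split("?", 1)[0]  # ignore any query string
    if path in EXACT_ROUTES:
        return EXACT_ROUTES[path]
    for prefix, category in PREFIX_ROUTES.items():
        if path.startswith(prefix):
            return category
    return None
```

Requests that return `None` here, such as ordinary inference calls, fall outside the model-management routes this section prioritizes.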

Where authentication is present, access telemetry should identify the user, service account, automation identity, or administrative source associated with model-ingestion activity.

Telemetry Availability Requirements

Minimum viable telemetry should include network exposure data, inbound access logs, host or container process telemetry, file-system monitoring for model artifacts, and outbound network visibility.

Preferred telemetry should include route-level request logs, endpoint process telemetry, model storage monitoring, container runtime telemetry, DNS telemetry, egress volume monitoring, identity telemetry, and credential-management activity logs.

High-confidence detection requires the ability to correlate at least two telemetry classes. The most useful combinations are network plus file telemetry, application plus endpoint telemetry, endpoint plus egress telemetry, and exposure data plus identity or credential activity.
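
The two-class requirement can be sketched as a check on the distinct telemetry classes represented in a set of observations. The class labels and the `(class, description)` tuple shape are assumptions made for this sketch.

```python
# Hypothetical labels for the telemetry classes named in this section.
TELEMETRY_CLASSES = {
    "network", "file", "application", "endpoint",
    "egress", "exposure", "identity",
}


def is_high_confidence(signals):
    """Return True when signals span at least two distinct telemetry classes.

    `signals` is an iterable of (telemetry_class, description) tuples.
    Unrecognized class labels are ignored.
    """
    classes = {cls for cls, _ in signals if cls in TELEMETRY_CLASSES}
    return len(classes) >= 2
```

Two network observations alone do not qualify under this sketch, while one network observation paired with one file observation does.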

Organizations should maintain a current inventory of Ollama hosts, deployment locations, exposed interfaces, owning teams, approved administrative sources, approved model sources, and expected outbound destinations.

Telemetry should be retained long enough to support investigation across the exposure window, patch window, credential-rotation window, and downstream credential-abuse review period.

Telemetry Limitations and Gaps

Many self-hosted AI deployments lack complete centralized logging. Developer workstations, lab servers, proof-of-concept systems, and temporary cloud instances often have limited visibility compared with production infrastructure.

Native application logs may not provide enough detail to identify every suspicious model-ingestion event. In those environments, reverse proxy logs, endpoint telemetry, container logs, firewall records, DNS logs, and egress monitoring become critical compensating sources.

Encrypted traffic, local-only deployments, container abstraction, short-lived workloads, and unmanaged developer systems may reduce detection fidelity.

Absence of observed outbound movement does not prove absence of exposure. Memory disclosure risk may involve sensitive prompts, credentials, or runtime context that require downstream review beyond network telemetry.

Telemetry gaps should be documented during triage. When direct evidence is incomplete, defenders should prioritize exposure validation, patch verification, model artifact review, credential rotation, and targeted hunting for downstream credential use.

S24 — Detection Opportunities and Gaps

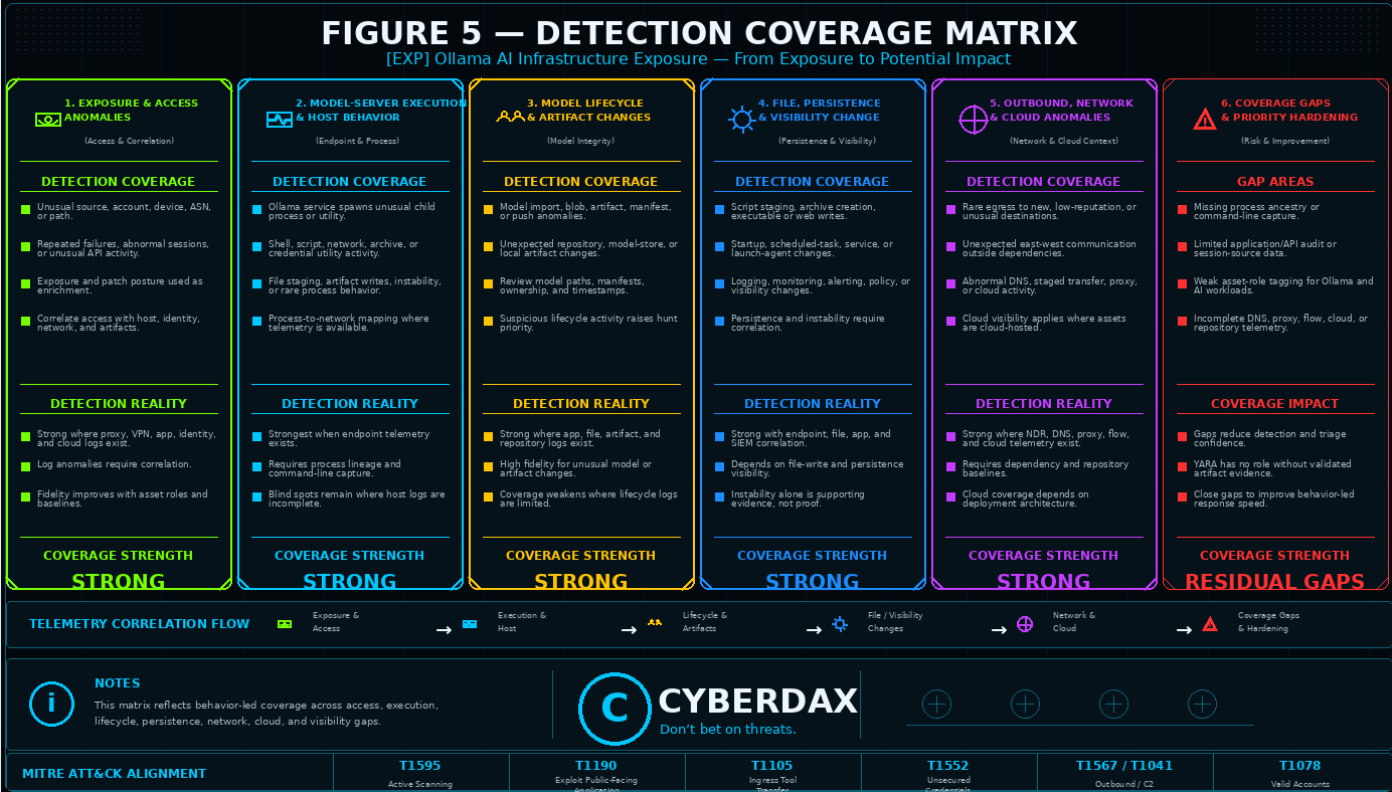

Figure 4

Detection Opportunities

The strongest detection opportunity is identifying exposed Ollama infrastructure that receives suspicious model-ingestion activity from an untrusted source. This provides a practical detection anchor because it combines exposure, service interaction, and behavior directly relevant to the risk scenario.

A second strong opportunity is correlation between model-ingestion activity and new or unusual model artifacts. Newly written GGUF-related files, model manifests, blob storage changes, temporary model components, or unexpected model storage activity can help distinguish routine service exposure from activity requiring investigation.

A third opportunity is identifying outbound movement after suspicious model-ingestion activity. Connections to unfamiliar destinations, unexpected model registries, cloud object storage, file-sharing services, newly observed domains, or large egress events can strengthen confidence that the activity may involve data exposure or artifact transfer.

Runtime instability creates another useful detection opportunity. Ollama process crashes, abnormal restarts, model-loading errors, service instability, or memory-pressure events occurring near suspicious model-ingestion activity should be treated as meaningful supporting evidence.

Credential and identity activity after suspicious exposure provides an important post-event hunting opportunity. Unusual API token use, abnormal cloud access, unfamiliar repository access, new access keys, or unexpected credential-management activity may indicate downstream use of exposed runtime context.

High-Value Correlation Opportunities

High-value detection should correlate untrusted access, model-ingestion activity, model artifact changes, and outbound communication.